So often in life we find a really easy solution to something much later than would be ideal. The same happened today when I was trying to clear out an S3 bucket that was costing us a lot of money in storage.

We wanted to quickly find out what was taking up the bulk of the storage, but we had thousands of folders. Normally I’d use a CLI command for this, but sometimes programmatic access isn’t available, or the console is just easier.

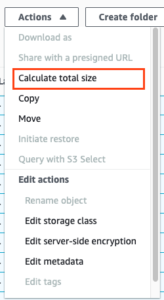

I couldn’t face either adding up file sizes manually or parsing a screenshot to find the largest folder. Then, by sheer luck, I stumbled upon this wonderful addition to the console: a “Calculate total size” option!

To use it, simply select the folders you want to analyse and choose that option. You’ll be presented with a report that you can then sort to find the largest. For us, it showed that over 18TB was being held in one folder, which allowed us to do some really quick housekeeping and cost optimisation.
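If you do have programmatic access, you can build a rough equivalent of this report from the S3 list API. The sketch below is my own approach, not how the console works internally. It uses boto3’s `list_objects_v2` paginator to walk the bucket, then sums object sizes by top-level folder (the key prefix up to the first `/`) and sorts the largest first. `folder_sizes` and `list_bucket_objects` are hypothetical helper names.

```python
from collections import defaultdict


def folder_sizes(objects, delimiter="/"):
    """Sum object sizes by top-level "folder" and sort largest first.

    `objects` is an iterable of (key, size_in_bytes) pairs. The folder is the
    part of the key up to and including the first delimiter (e.g. "logs/");
    keys with no delimiter sit at the bucket root and are grouped under "".
    """
    totals = defaultdict(int)
    for key, size in objects:
        if delimiter in key:
            folder = key.split(delimiter, 1)[0] + delimiter
        else:
            folder = ""
        totals[folder] += size
    return sorted(totals.items(), key=lambda item: item[1], reverse=True)


def list_bucket_objects(bucket, prefix=""):
    """Yield (key, size) for every object under `prefix` (hypothetical helper).

    Needs boto3 and AWS credentials. Each page fetched by the paginator is one
    ListObjectsV2 request returning at most 1,000 keys.
    """
    import boto3  # imported lazily so folder_sizes() works without boto3 installed

    paginator = boto3.client("s3").get_paginator("list_objects_v2")
    for page in paginator.paginate(Bucket=bucket, Prefix=prefix):
        for obj in page.get("Contents", []):
            yield obj["Key"], obj["Size"]
```

For example, `folder_sizes(list_bucket_objects("my-example-bucket"))[:10]` would return the ten largest top-level folders (the bucket name is a placeholder). Bear in mind that this walks every object, so it also issues one LIST request per page of up to 1,000 keys.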

This is a sample of the report you’ll see:

This feature was updated in 2021, so I must have just missed it. It goes to show you never stop learning.

Be aware that calculating the size makes LIST requests, which are charged, so if you have a very large number of objects, this might impact your costs.
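To get a feel for how many requests that might be, here’s a rough sketch. It assumes the size calculation lists objects the same way the API does, in pages of at most 1,000 keys. That is an assumption on my part, since I don’t know exactly how the console paginates. Multiply the result by your region’s LIST request price to estimate the cost. `estimated_list_requests` is a hypothetical helper.

```python
# ListObjectsV2 returns at most 1,000 keys per call (assumed to apply to the console too).
MAX_KEYS_PER_LIST_REQUEST = 1000


def estimated_list_requests(object_count):
    """Rough estimate of list calls needed to enumerate `object_count` objects."""
    if object_count < 0:
        raise ValueError("object_count must be non-negative")
    # Integer ceiling division; an empty folder still costs one request.
    pages = (object_count + MAX_KEYS_PER_LIST_REQUEST - 1) // MAX_KEYS_PER_LIST_REQUEST
    return max(1, pages)
```

For example, a folder holding 2.5 million objects works out to roughly 2,500 requests under this assumption.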

Enjoy!
